Without Changing a Single Workflow

If you’re running analytics on Snowflake, you’re probably spending more on storage than you need to. Most organizations store all their data—active, historical, and reference—in Snowflake-managed storage at $23–$40 per TB per month, regardless of how frequently it’s actually accessed. The opportunity is simple: move warm and cold data to a cheaper storage layer while keeping everything fully queryable through Snowflake’s native features. No workflow changes, no dashboard rewrites, no retraining.

The Hidden Cost of Keeping Everything in Hot Storage

Snowflake’s pricing bundles compute, storage, and data transfer into a single bill. For a typical enterprise deployment, the split looks roughly like this: 75% compute, 18% storage, and 7% data transfer. Storage seems like a small share until you realize most of that data is sitting idle. Historical records, archived datasets, reference tables, and ingested third-party data are billed at hot-tier rates despite being queried infrequently or seasonally.

The solution isn’t to archive data into Glacier or deep storage where it becomes inaccessible. It’s to move it to a storage layer that’s dramatically cheaper but still queryable in real time through Snowflake’s existing external table functionality.

How It Works: Snowflake External Tables + Akave

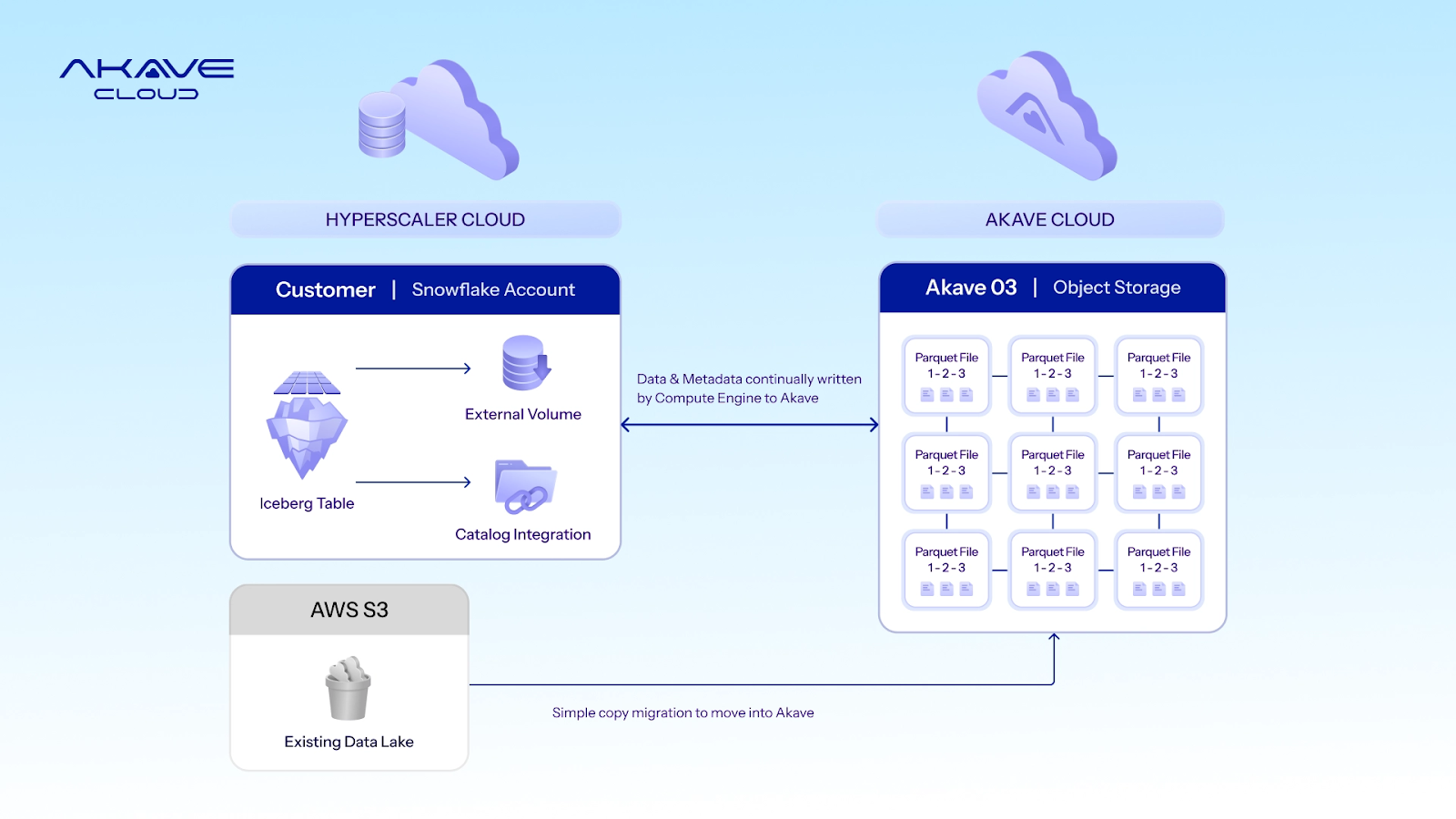

Snowflake already supports external tables backed by S3-compatible object storage. Akave O3 is an S3-compatible storage layer priced at $14.99 per TB per month, roughly 35% to 63% below the $23–$40 per TB that Snowflake-managed storage costs. The architecture is straightforward:

Your dashboards, SQL queries, spatial analytics, and automated workflows continue working identically. They query Snowflake; Snowflake reads Parquet files from Akave via external tables. No ETL changes, no code changes, no retraining.
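To make the external-table path concrete, here is a minimal Python sketch that renders the two Snowflake statements involved: a stage pointing at an S3-compatible endpoint, and an external table over Parquet files in that stage. The stage, table, bucket, prefix, and endpoint names are hypothetical placeholders, and the exact options your account needs (credential handling, refresh behavior) should be checked against Snowflake's documentation for S3-compatible storage.

```python
# Sketch only: every identifier passed in below is a hypothetical placeholder.

def stage_ddl(stage: str, bucket: str, endpoint: str) -> str:
    """Render a CREATE STAGE statement for an S3-compatible endpoint."""
    return (
        f"CREATE STAGE {stage}\n"
        f"  URL = 's3compat://{bucket}/'\n"
        f"  ENDPOINT = '{endpoint}'\n"
        "  CREDENTIALS = (AWS_KEY_ID = '<key-id>' AWS_SECRET_KEY = '<secret-key>');"
    )


def external_table_ddl(table: str, stage: str, prefix: str) -> str:
    """Render a CREATE EXTERNAL TABLE statement over Parquet files in a stage."""
    location = f"@{stage}/{prefix.strip('/')}/"
    return (
        f"CREATE EXTERNAL TABLE {table}\n"
        f"  LOCATION = {location}\n"
        "  FILE_FORMAT = (TYPE = PARQUET)\n"
        # Assumption: metadata is refreshed manually rather than by event notifications.
        "  AUTO_REFRESH = FALSE;"
    )


if __name__ == "__main__":
    print(stage_ddl("akave_stage", "example-bucket", "s3.example-endpoint.test"))
    print()
    print(external_table_ddl("historical_orders_ext", "akave_stage", "warm/orders"))
```

Existing queries can then select from the external table (or from a view on top of it) the same way they select from a native table, which is what keeps dashboards and workflows unchanged.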

The Economics: More Than 15% Off Your Total Infrastructure Bill

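A typical enterprise Snowflake deployment spending $25,000 per month breaks down, using the 75/18/7 split above, into roughly $18,750 for compute, $4,500 for storage, and $1,750 for data transfer. When warm and cold data moves to Akave, storage drops by $2,461 and data transfer by $1,750, a combined saving of $4,211 per month.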

Hot data (active dashboards, real-time feeds) stays in Snowflake-managed storage. Only warm and cold tiers move to Akave. Your existing workflows are completely unaffected.
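The arithmetic behind these figures can be checked with a short Python sketch. It uses only numbers stated in this article: the $25,000 monthly bill, the 75/18/7 split, and the $2,461 storage and $1,750 data transfer savings quoted in the FAQ. The variable names are illustrative, and your own split will differ.

```python
# All dollar figures come from the article; names are illustrative.
MONTHLY_BILL = 25_000
SPLIT_PERCENT = {"compute": 75, "storage": 18, "data_transfer": 7}
MONTHLY_SAVINGS = {"storage": 2_461, "data_transfer": 1_750}

# Bill before the offload, per category (integer math avoids float noise).
before = {name: MONTHLY_BILL * pct // 100 for name, pct in SPLIT_PERCENT.items()}

# Bill after the offload: subtract the quoted savings where they apply.
after = {name: before[name] - MONTHLY_SAVINGS.get(name, 0) for name in before}

total_saved = sum(MONTHLY_SAVINGS.values())
saved_share = total_saved / MONTHLY_BILL
annual_saved = total_saved * 12

if __name__ == "__main__":
    for name in before:
        print(f"{name:>14}: ${before[name]:>6,} -> ${after[name]:>6,}")
    print(f"monthly saving: ${total_saved:,} ({saved_share:.1%})")
    print(f"annual saving:  ${annual_saved:,}")
```

Compute is untouched in this model; the whole saving comes from the storage and data transfer lines.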

For data-heavy organizations working with satellite imagery, LiDAR, or large raster archives exceeding 200 TB, annual savings scale to $74,000 or more.

Redirect Storage Savings Into Compute

The $4,211 saved monthly on storage and data transfer equals roughly 1,400 additional Snowflake credits per month at Enterprise pricing (assuming about $3 per credit). That translates to over 700 extra hours of Small warehouse compute (2 credits per hour) or about 350 hours on a Medium warehouse (4 credits per hour), approximately 22% more compute capacity at the same total budget, and significantly more headroom for spatial queries, automated workflows, and AI-driven analytics. You’re not just saving money. You’re redirecting budget from idle storage into active compute that drives business value.
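As a rough check on the credit conversion, the sketch below turns the monthly saving into credits and warehouse hours. Two inputs are assumptions rather than figures from this article: a price of $3.00 per credit (a commonly quoted Enterprise on-demand rate that varies by region and contract) and Snowflake's standard consumption of 2 credits per hour for a Small warehouse and 4 for a Medium one.

```python
MONTHLY_SAVING = 4_211          # from the article
COMPUTE_SPEND = 18_750          # 75% of the $25,000 monthly bill
PRICE_PER_CREDIT = 3.00         # assumption: Enterprise on-demand, region-dependent
CREDITS_PER_HOUR = {"Small": 2, "Medium": 4}  # standard warehouse rates

# Credits the saving would buy at the assumed price.
extra_credits = MONTHLY_SAVING / PRICE_PER_CREDIT

# Warehouse hours those credits cover, per size.
extra_hours = {size: extra_credits / rate for size, rate in CREDITS_PER_HOUR.items()}

# Relative increase in compute budget if the saving is redirected.
compute_uplift = MONTHLY_SAVING / COMPUTE_SPEND

if __name__ == "__main__":
    print(f"extra credits per month: {extra_credits:,.0f}")
    for size, hours in extra_hours.items():
        print(f"{size} warehouse hours: {hours:,.0f}")
    print(f"compute uplift: {compute_uplift:.1%}")
```

Under these assumptions, the 700-hour figure corresponds to a Small warehouse; a Medium warehouse burns credits twice as fast and gets about half as many hours.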

Beyond Cost Savings: What Else You Get

Portability Across Clouds and Engines

Today, your data is locked inside a single hyperscaler. Querying the same data from Snowflake and Databricks means duplicating it. Switching clouds means paying massive egress fees. With Akave as a neutral storage layer, one copy of your data is accessible from any engine and any cloud—Snowflake, BigQuery, Databricks, Presto, Trino, or on-prem—simultaneously via standard S3 APIs.

Immutable, Verifiable Data

Every Parquet file stored on Akave receives a SHA-256 content hash and an on-chain CID at write time. For regulated industries—insurance underwriters using risk maps, governments making zoning decisions, telcos optimizing spectrum allocation—this provides cryptographic proof that the data underlying a decision was not altered after the fact. No other storage provider offers this natively at the S3 API level.
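On the client side, checking a file against a recorded SHA-256 hash takes only Python's standard library. This is a minimal sketch under one assumption: you already hold the hex digest that was recorded for the object at write time. How Akave exposes that digest or the on-chain CID is not covered here, and the function names are illustrative.

```python
import hashlib
import io


def sha256_of_stream(stream, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a binary stream, read in chunks."""
    digest = hashlib.sha256()
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        digest.update(chunk)
    return digest.hexdigest()


def matches_recorded_hash(stream, recorded_hex: str) -> bool:
    """True if the stream's content hashes to the recorded digest."""
    return sha256_of_stream(stream) == recorded_hex.strip().lower()


if __name__ == "__main__":
    # Stand-in bytes; in practice, open the downloaded Parquet file with open(path, "rb").
    payload = b"example parquet bytes"
    recorded = hashlib.sha256(payload).hexdigest()
    print(matches_recorded_hash(io.BytesIO(payload), recorded))
    print(matches_recorded_hash(io.BytesIO(payload + b"tampered"), recorded))
```

Reading in chunks keeps memory use flat, which matters for the multi-gigabyte raster files discussed later in this article.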

Data Sovereignty

Organizations in government, defense, and telecommunications face strict data residency requirements. Akave provides verifiable storage that isn’t locked to a single hyperscaler region while remaining fully accessible through standard S3 APIs.

Where This Fits Best

Any organization with significant volumes of infrequently accessed data in Snowflake is a candidate. The pattern is especially strong in these verticals:

Your Biggest Storage Cost Is Your Biggest Savings Opportunity

If your organization works with raster data—satellite imagery, elevation models, weather grids, LiDAR—those files are typically 10x to 100x larger than vector data and are overwhelmingly cold. They’re ingested once and queried periodically for analysis. When stored in Parquet format (as is increasingly common with modern raster specifications), they’re already in the exact format Akave stores natively and Snowflake reads via Iceberg external tables. No format conversion needed; the files move as-is. For data-intensive organizations, raster archives are likely the single largest line item in the Snowflake storage bill—and the most natural offload candidate.

Getting Started: Four Steps, Less Than a Day

Total integration effort: less than one day for a typical deployment.

FAQs

How do I reduce my Snowflake storage costs without changing workflows?

Move warm and cold data to Akave's S3-compatible storage at $14.99/TB/month while keeping everything queryable through Snowflake external tables. Your dashboards, SQL queries, and automated workflows continue working identically—Snowflake reads Parquet files from Akave via external tables. No ETL changes, no code changes, no retraining required.

What is the difference between Snowflake-managed storage and external tables?

Snowflake-managed storage keeps all data inside Snowflake at $23–$40/TB/month regardless of access frequency. External tables let Snowflake query data stored in S3-compatible object storage like Akave at $14.99/TB/month. Hot data stays in Snowflake-managed storage; warm and cold data moves to external storage while remaining fully queryable.

How much can I save by offloading Snowflake data to external storage?

A typical enterprise Snowflake deployment spending $25,000/month can save approximately $4,200/month (17%) by offloading warm and cold data to Akave: $2,461 on storage plus $1,750 on data transfer (zero egress fees). Annual savings reach $50,000+. For organizations with 200 TB+ of raster archives, annual savings scale to $74,000 or more.

Will query performance degrade with Snowflake external tables?

For warm and cold data queried infrequently—historical records, archived campaigns, reference datasets—the difference is negligible. Hot data (active dashboards, real-time feeds) stays in Snowflake-managed storage. You choose what to offload based on access patterns.

How do I migrate data from Snowflake to external storage?

Migration is a simple S3-to-S3 copy with zero downtime. Akave's direct connect to AWS West reduces one-time egress cost from $90/TB to $20/TB. For a typical 50 TB offload, migration takes hours, not days. Snowflake continues running throughout. Total integration effort: less than one day for a typical deployment.

Is data on external storage still queryable in real time?

Yes, always. Unlike archiving to Glacier or deep storage, data on Akave is live queryable at all times through Snowflake external tables. No restore wait, no retrieval fee. You get archive-tier pricing with hot-tier accessibility.

What is the best data to offload from Snowflake first?

Start with warm and cold data: historical records, archived datasets, reference tables, and ingested third-party data queried infrequently or seasonally. Raster data (satellite imagery, LiDAR, elevation models) is often the largest storage line item and the most natural offload candidate—typically 10x to 100x larger than vector data.

How do I verify data integrity for compliance and audits?

Every object stored on Akave receives a SHA-256 content hash and an on-chain CID at write time. If a regulator asks whether a dataset is the exact version used for a decision, you have cryptographic proof—not just a log entry. This matters for insurance underwriters, government agencies, and any organization making auditable decisions based on stored data.

Can I query the same data from Snowflake and Databricks without duplication?

Yes. Akave uses the standard S3 API and stores data in open formats (Parquet, Iceberg). The same files are accessible from Snowflake, Databricks, Presto, Flink, Trino, and BigQuery simultaneously. One copy of the data, multiple compute engines—no duplication needed.

Am I trading Snowflake vendor lock-in for another vendor lock-in?

No. Akave uses the standard S3 API. Your data stays in open formats (Parquet, Iceberg). You can copy it back to AWS S3, GCS, or any S3-compatible storage at any time. Zero egress fees mean you can leave whenever you want—exit optionality is built in.

Akave Cloud is an enterprise-grade, distributed, and scalable object storage service designed for large-scale datasets in AI, analytics, and enterprise pipelines. It offers S3 object compatibility, cryptographic verifiability, immutable audit trails, and SDKs for AI agents, all with zero egress fees and no vendor lock-in, saving up to 80% on storage costs vs. hyperscalers.

Akave Cloud works with a wide ecosystem of partners operating hundreds of petabytes of capacity, enabling deployments across multiple countries and powering sovereign data infrastructure. The stack is also pre-qualified with key enterprise apps such as Snowflake.